Particle physics is the epitome of ‘big science’. Answering our most fundamental questions about physics requires world-class experiments that push the limits of what’s technologically possible. Such incredibly sophisticated experiments, like those at the LHC, require big facilities to make them possible, big collaborations to run them, big project planning to make dreams of new facilities a reality, and committees with big acronyms to decide what to build.

Enter the Particle Physics Project Prioritization Panel (aka P5), which is tasked with assessing the landscape of future projects and laying out a roadmap for the future of the field in the US. And because these large projects are inevitably international endeavors, the report the panel released last week has a large impact on the global direction of the field. The report lays out a vision for the next decade of neutrino physics, cosmology, dark matter searches and future colliders.

P5 follows the community-wide brainstorming effort known as the Snowmass Process, in which researchers from all areas of particle physics laid out a vision for the future. The Snowmass Process led to a particle physics ‘wish list’, consisting of all the projects and research particle physicists would be excited to work on. The P5 process is the hard part, when this incredibly exciting and diverse research program has to be made to fit within realistic budget scenarios. Advocates for different projects and research areas had to make a case for what science their project could achieve and provide a detailed estimate of the costs. The panel then takes in all this input and makes a set of recommendations on how the budget should be allocated: which projects should be realized and which hopes are dashed. Though the panel only produces a set of recommendations, they are used quite extensively by the Department of Energy, which actually allocates funding. If your favorite project is not endorsed by the report, it’s very unlikely to be funded.

Particle physics is an incredibly diverse field, covering sub-atomic to cosmic scales, so the recommendations are divided up into several different areas. In this post I’ll cover the panel’s recommendations for neutrino physics and the cosmic frontier. Future colliders, perhaps the spiciest topic, will be covered in a follow-up post.

The Future of Neutrino Physics

For those in the neutrino physics community, all eyes were on the panel’s recommendations regarding the Deep Underground Neutrino Experiment (DUNE). DUNE is the US’s flagship particle physics experiment for the coming decade and aims to be the definitive worldwide neutrino experiment in the years to come. A high-powered beam of neutrinos will be produced at Fermilab and sent 800 miles through the Earth’s crust towards several large detectors placed in a mine in South Dakota. It’s a much bigger project than previous neutrino experiments, unifying essentially the entire US community into a single collaboration.

DUNE is set up to produce world-leading measurements of neutrino oscillations, the property by which a neutrino produced in one ‘flavor state’ (e.g. an electron neutrino) gradually changes its state (e.g. into a muon neutrino) with sinusoidal probability as it propagates through space. This oscillation is made possible by a simple piece of quantum mechanical weirdness: a neutrino’s flavor state, whether it couples to electrons, muons or taus, is not the same as its mass state. Neutrinos of a definite mass are therefore a mixture of the different flavors and vice versa.
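
To make the ‘sinusoidal probability’ a bit more concrete, here is a minimal sketch using the standard two-flavor approximation, in which the chance of a flavor change is sin²(2θ) · sin²(1.267 Δm² L / E), with Δm² in eV², the baseline L in km and the energy E in GeV. This is only an illustration: the real analysis involves three flavors and the effect of the matter the beam passes through, and the function name, mixing value, mass splitting and energies below are assumptions chosen for the example, not DUNE’s actual parameters. Only the 800 mile baseline comes from the description above.

```python
import math

MILES_TO_KM = 1.609344


def oscillation_probability(sin2_2theta, delta_m2_ev2, baseline_km, energy_gev):
    """Two-flavor probability that a neutrino has changed flavor.

    sin2_2theta  : mixing strength sin^2(2*theta), between 0 and 1
    delta_m2_ev2 : difference of squared masses, in eV^2
    baseline_km  : distance travelled, in km
    energy_gev   : neutrino energy, in GeV
    """
    phase = 1.267 * delta_m2_ev2 * baseline_km / energy_gev
    return sin2_2theta * math.sin(phase) ** 2


if __name__ == "__main__":
    baseline_km = 800 * MILES_TO_KM  # the 800 mile beam path quoted above

    # Illustrative (assumed) values, not measured DUNE parameters.
    sin2_2theta = 0.1
    delta_m2_ev2 = 2.5e-3

    for energy_gev in (1.0, 2.0, 3.0, 5.0):
        p = oscillation_probability(sin2_2theta, delta_m2_ev2, baseline_km, energy_gev)
        print(f"E = {energy_gev:.1f} GeV -> flavor-change probability {p:.4f}")
```

The probability rises and falls as the energy changes at a fixed distance, and mapping out that pattern is what lets an experiment extract the mixing strength and the mass splitting.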

Detailed measurements of this oscillation are the best way we know to determine several key neutrino properties. DUNE aims to finally pin down two crucial neutrino properties: their ‘mass ordering’, which will solidify how the different neutrino flavors and measured mass differences all fit together, and their ‘CP-violation’ which specifies whether neutrinos and their anti-matter counterparts behave the same or not. DUNE’s main competitor is the Hyper-Kamiokande experiment in Japan, another next-generation neutrino experiment with similar goals.

Construction of the DUNE experiment has been ongoing for several years and unfortunately has not been going quite as well as hoped. It has faced significant schedule delays and cost overruns. DUNE is now not expected to start taking data until 2031, significantly behind Hyper-Kamiokande’s projected 2027 start. These delays may lead to Hyper-K making these definitive neutrino measurements years before DUNE, which would be a significant blow to the experiment’s impact. This left many DUNE collaborators worried about its broad support from the community.

It came as a relief, then, when the P5 report re-affirmed the strong science case for DUNE, calling it the “ultimate long baseline” neutrino experiment. The report strongly endorsed the completion of the first phase of DUNE. However, it recommended a pared-down version of its upgrade, advocating for an earlier beam upgrade in lieu of additional detectors. This re-imagined upgrade will still achieve the core physics goals of the original proposal with significant cost savings. This report, along with news that the beleaguered underground cavern construction in South Dakota is now 90% complete, was certainly welcome holiday news to the neutrino community. It also sets up a decade-long race between DUNE and Hyper-K to be the first to measure these key neutrino properties.

Cosmic Implications

While we normally think of particle physics as focused on the behavior of sub-atomic particles, it’s really about the study of fundamental forces and laws, no matter the method. This means that telescopes to study the oldest light in the universe, the Cosmic Microwave Background (CMB), fall into the same budget category as giant accelerators studying sub-atomic particles. Though the experiments in these two areas look very different, the questions they seek to answer are cross-cutting. Understanding how particles interact at very high energies helps us understand the earliest moments of the universe, when such particles were all interacting in a hot dense plasma. Likewise, studying these early moments of the universe and its large-scale evolution can tell us what kinds of particles and forces are influencing its dynamics. When asking fundamental questions about the universe, one needs both the sharpest microscopes and the grandest panoramas possible.

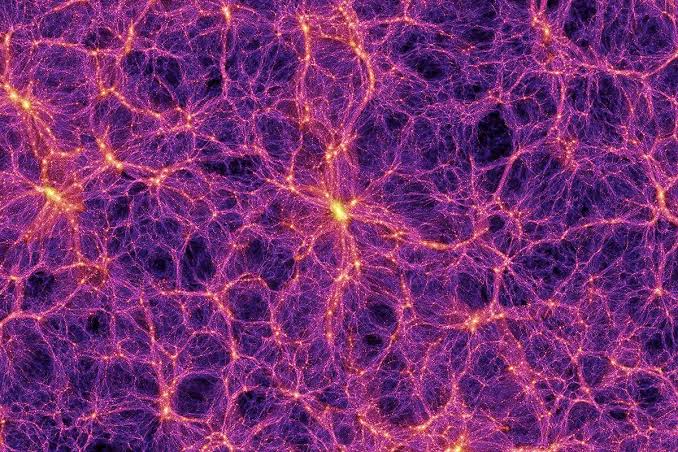

The most prominent example of this blending of the smallest and largest scales in particle physics is dark matter. Some of our best evidence for dark matter comes from analyzing the cosmic microwave background to determine how the primordial plasma behaved. These studies showed that some type of ‘cold’ matter that doesn’t interact with light, aka dark matter, was necessary to form the first clumps that eventually seeded the formation of galaxies. Without it, the universe would be much more soup-y and structureless than what we see today.

Determining what dark matter is therefore requires an attack on two fronts: designing experiments here on Earth that attempt to directly detect it, and further studying its cosmic implications to look for more clues as to its properties.

The panel recommended next-generation telescopes to study the CMB as a top priority. The so-called ‘Stage 4’ CMB experiment (CMB-S4) would deploy telescopes at both the South Pole and in Chile’s Atacama Desert to better characterize sources of atmospheric noise. The CMB has been studied extensively before, but the increased precision of CMB-S4 could shed light on mysteries like dark energy, dark matter, inflation, and the recent Hubble Tension. Given the past fruitfulness of these efforts, I think few doubted the science case for such a next-generation experiment.

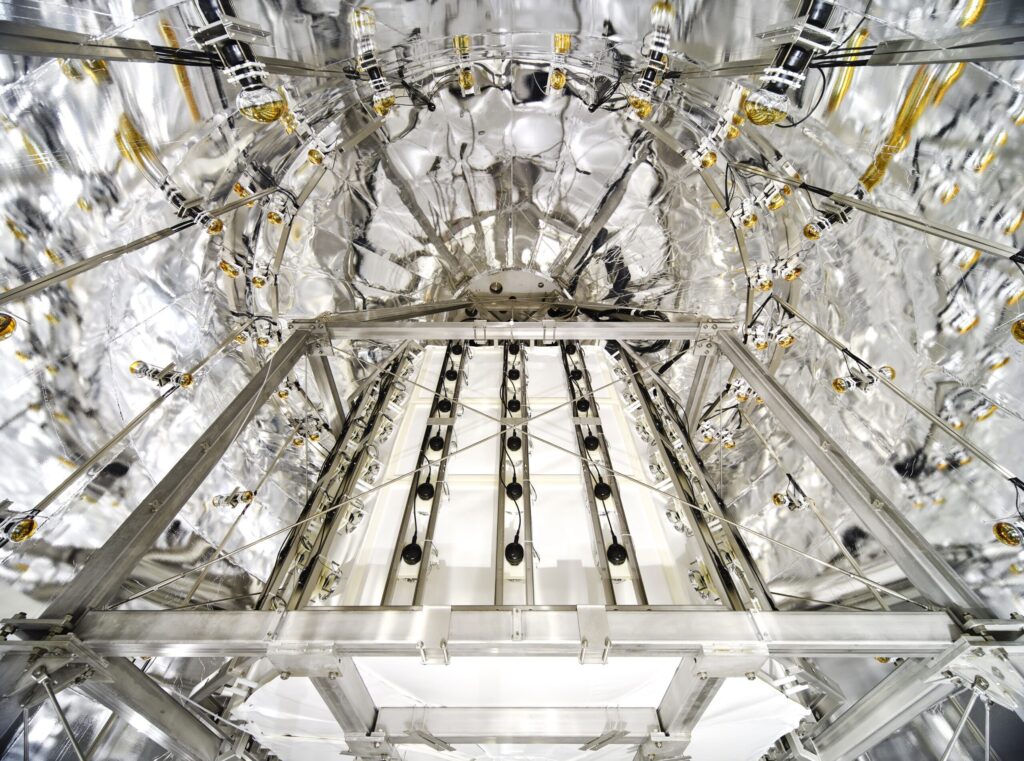

The P5 report recommended a suite of new dark matter experiments in the next decade, including the ‘ultimate’ liquid-xenon-based dark matter search. Such an experiment would follow in the footsteps of massive noble gas experiments like LZ and XENONnT, which have been hunting for a favored type of dark matter called WIMPs for the last few decades. These experiments essentially build giant vats of liquid xenon, carefully shield them from any sources of external radiation, and look for signs of dark matter particles bumping into any of the xenon atoms. The larger the vat of xenon, the higher the chance a dark matter particle will bump into something. Current-generation experiments have ~7 tons of xenon, and the next-generation experiment would be even larger. The next generation aims to reach the so-called ‘neutrino floor’, the point at which the experiments would be sensitive enough to observe astrophysical neutrinos bumping into the xenon. Such neutrino interactions would look extremely similar to those of dark matter, and thus represent an unavoidable background that would mark the ultimate sensitivity of this type of experiment. WIMPs could still be hiding in a basement below this neutrino floor, but finding them would be exceedingly difficult.
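
The ‘bigger vat, better odds’ logic can be sketched with simple counting statistics. The snippet below assumes that the expected number of dark matter collisions scales linearly with the mass of xenon and the running time, and that the collisions follow Poisson statistics. The interaction rate is a made-up placeholder (nobody knows the true value, which is the whole point of the search), and the larger detector masses are hypothetical; only the ~7 ton figure comes from the text above.

```python
import math


def expected_events(rate_per_ton_year, mass_tons, exposure_years):
    """Mean number of collisions, assuming it scales with mass and time."""
    return rate_per_ton_year * mass_tons * exposure_years


def prob_at_least_one(mean_events):
    """Poisson probability of seeing one or more events."""
    return 1.0 - math.exp(-mean_events)


if __name__ == "__main__":
    # Placeholder rate: purely hypothetical, chosen only for illustration.
    hypothetical_rate = 0.01  # collisions per ton of xenon per year
    exposure_years = 5        # assumed running time

    # 7 tons is the current-generation scale quoted above;
    # the larger masses are hypothetical next-generation sizes.
    for mass_tons in (7, 40, 80):
        mean = expected_events(hypothetical_rate, mass_tons, exposure_years)
        chance = prob_at_least_one(mean)
        print(f"{mass_tons:>3} tons: expect {mean:.2f} events, "
              f"chance of at least one = {chance:.1%}")
```

This ignores backgrounds entirely. In a real experiment the neutrino floor described above adds look-alike events that also grow with detector size, which is why simply scaling up eventually stops helping.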

WIMPs are not the only dark matter candidates in town, and recent years have seen an explosion of interest in the broad range of dark matter possibilities, with axions being a prominent example. Other kinds of dark matter could have very different properties than WIMPs and have had far fewer dedicated experiments to search for them. There is ‘low hanging fruit’ to pluck in the way of relatively cheap experiments which can achieve world-leading sensitivity. Previously, these ‘table top’ sized experiments had a notoriously difficult time obtaining funding, as they were often crowded out of the budgets by the massive flagship projects. However, small experiments can be crucial to ensuring our best chance of dark matter discovery, as they fill in the blind spots missed by the big projects.

The panel therefore recommended creating a new pool of funding set aside for these smaller scale projects. Allowing these smaller scale projects to flourish is important for the vibrancy and scientific diversity of the field, as the centralization of ‘big science’ projects can sometimes lead to unhealthy side effects. This specific recommendation also mirrors a broader trend of the report: to attempt to rebalance the budget portfolio to be spread more evenly and less dominated by the large projects.

What Didn’t Make It

Any report like this comes with some tough choices. Budget realities mean not all projects can be funded. Besides the paring down of some of DUNE’s upgrades, one of the biggest areas that was recommended against was ‘accessory experiments at the LHC’. In particular, MATHUSLA and the Forward Physics Facility were two experiments that proposed to build additional detectors near already existing LHC collision points to look for particles that may be missed by the current experiments. By building new detectors hundreds of meters away from the collision point, shielded by concrete and the earth, they could obtain unique sensitivity to ‘long lived’ particles capable of traversing such distances. These experiments would follow in the footsteps of the current FASER experiment, which is already producing impressive results.

While FASER found success as a relatively ‘cheap’ experiment, reusing existing detector components and situating itself in a beam tunnel, these new proposals were asking for quite a bit more. The scale of these detectors would have required new caverns to be built, significantly increasing the cost. Given the cost and specialized purpose of these detectors, the panel recommended against their construction. These collaborations may now try to find ways to pare down their proposals so they can apply to the new small project portfolio.

Another major decision by the panel was to recommend against hosting a new Higgs factory collider in the US. But that will be discussed more in a future post.

Conclusions

The P5 panel was faced with a difficult task: the total cost of all the projects it was presented with was three times the budget. But it was able to craft a plan that continues the work of the previous decade, addresses current shortcomings and lays out an inspiring vision for the future. So far the community seems to be strongly rallying behind it. At time of writing, over 2700 community members, from undergraduates to senior researchers, have signed a petition endorsing the panel’s recommendations. This strong show of support will be key for turning these recommendations into actual funding, and hopefully for lobbying Congress to increase funding so that more of this vision can be realized.

For those interested, the full report, as well as executive summaries of different areas, can be found on the P5 website. Members of the US particle physics community are also encouraged to sign the petition endorsing the recommendations here.

And stay tuned for part 2 of our coverage, which will discuss the implications of the report for future colliders!