Title: “New physics implications of recent search for $latex K_L \rightarrow \pi^0 \nu \bar{\nu}$ at KOTO”

Author: Kitahara et al.

Reference: https://arxiv.org/pdf/1909.11111.pdf

The Standard Model, though remarkably accurate in its depiction of many physical processes, is incomplete. There are a few key reasons to think this: most prominently, it fails to account for gravitation, dark matter, and dark energy. There are also a host of more nuanced issues: it is plagued by “fine tuning” problems, whereby its parameters must be tweaked in order to align with observation, and “free parameter” problems, which come about since the model requires the direct insertion of parameters such as masses and charges rather than providing explanations for their values. Taken together, these shortcomings strongly point to the existence of as-yet undetected particles and to a more complete theory at higher energies. This is to be expected, since a quantum theory of gravity should live at the Planck scale, where both quantum mechanics and general relativity become relevant.

A promising strategy for probing physics beyond the Standard Model is to look at decay processes that are incredibly rare in nature. Because the theoretical uncertainties on their predicted rates are small, only a few detected events are needed to signal new physics. A classic example of this strategy in action is the discovery of the positron via particle showers in a cloud chamber back in 1932. Since particle physics models of the time predicted zero anti-electron events during these showers, just one observation was enough to herald a new particle.

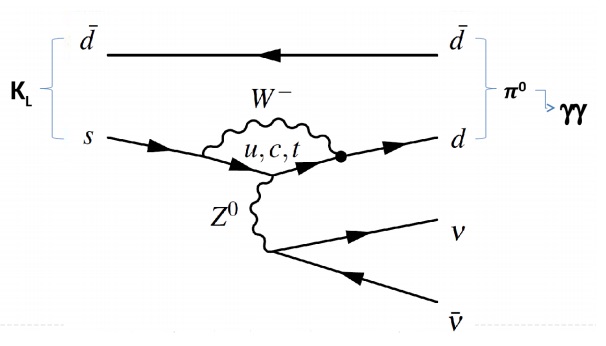

The KOTO experiment, conducted at the Japan Proton Accelerator Research Complex (J-PARC), takes advantage of this strategy. The experiment was designed specifically to investigate a promising rare decay channel: $latex K_L \rightarrow \pi^0 \nu \bar{\nu}$, the decay of a long-lived neutral kaon into a neutral pion, a neutrino, and an antineutrino. Let’s break down this interaction and discuss its significance. The neutral kaon, a meson composed of a down quark and an anti-strange quark, comes in both long and short varieties, named for their lifetimes relative to each other. The Standard Model predicts a branching ratio of $latex 3 \times 10^{-11}$ for this particular decay process, meaning that out of all the long-lived neutral kaons that decay, only this tiny fraction decays into the combination of a neutral pion, a neutrino, and an antineutrino. This makes the process incredibly rare in nature.
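To get a feel for how small that branching ratio is, here is a minimal sketch of the arithmetic. The kaon count used below is a made-up round number chosen for illustration, not a KOTO figure, and the sketch ignores detector acceptance and efficiency entirely.

```python
# Illustrative arithmetic only. The number of kaon decays passed in below is
# a hypothetical round number, NOT a KOTO figure, and detector acceptance
# and efficiency are ignored.

SM_BRANCHING_RATIO = 3e-11  # Standard Model prediction quoted in the text


def expected_decays(n_kaon_decays, branching_ratio=SM_BRANCHING_RATIO):
    """Average number of decays into the rare channel among n_kaon_decays."""
    return n_kaon_decays * branching_ratio


def decays_per_signal(branching_ratio=SM_BRANCHING_RATIO):
    """Average number of kaon decays needed for one decay into the channel."""
    return 1.0 / branching_ratio


hypothetical_kaon_decays = 1e12  # assumption, for illustration only
print("Expected rare decays:", expected_decays(hypothetical_kaon_decays))
print("Kaon decays per rare decay:", decays_per_signal())
```

In other words, on average tens of billions of long-lived kaons must decay before a single one does so through this channel, and that is before asking whether the detector actually catches it.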

Here’s where it gets exciting. The KOTO experiment recently reported four signal events within this decay channel, where the Standard Model predicts just $latex 0.10 \pm 0.02$ events. If all four of these events are confirmed as the desired long-lived neutral kaon decays, new physics is required to explain the enhanced signal. There are several possibilities, recently explored in a new paper by Kitahara et al., for what this new physics might be. Before we go into too much detail, let’s consider how KOTO’s observation came to be.
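How surprising are four events when only about 0.10 are expected? A rough way to gauge this is to treat the event count as a Poisson process and ask how often chance alone would produce four or more events. The sketch below is a naive counting estimate under that assumption. It is not the statistical treatment used by KOTO or by Kitahara et al., and it handles the quoted uncertainty only by trying the two ends of the $latex 0.10 \pm 0.02$ range.

```python
import math


def poisson_pmf(k, mu):
    """Probability of observing exactly k events when mu are expected."""
    return math.exp(-mu) * mu**k / math.factorial(k)


def poisson_tail(n_obs, mu):
    """Probability of observing n_obs or more events when mu are expected."""
    return 1.0 - sum(poisson_pmf(k, mu) for k in range(n_obs))


observed = 4  # events reported, as quoted in the text
for expected in (0.08, 0.10, 0.12):  # central value and the +/- 0.02 range
    p = poisson_tail(observed, expected)
    print(f"expected = {expected:.2f}  ->  P(N >= {observed}) = {p:.2e}")
```

Under these simplified assumptions the chance probability comes out at the level of a few in a million, which is why the result drew so much attention, provided all four events survive further scrutiny.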

The KOTO experiment is a fixed-target experiment, in which accelerated particles collide with a stationary target. In this case, protons with an energy of 30 GeV collide with a gold target, and a beam of kaons remains after other products are diverted with collimators and magnets. The desired $latex K_L \rightarrow \pi^0 \nu \bar{\nu}$ mode is particularly difficult to observe experimentally for several reasons. First, the initial kaon and all of the final decay products are electrically neutral, making them harder to detect since they do not ionize the material they pass through, and ionization is a primary strategy for detecting charged particles. Second, neutral pions are produced via several other kaon decay channels, so several strategies are needed to differentiate neutral pions produced by $latex K_L \rightarrow \pi^0 \nu \bar{\nu}$ from those produced by $latex K_L \rightarrow 3 \pi^0$, $latex K_L \rightarrow 2\pi^0$, and $latex K_L \rightarrow \pi^0 \pi^+ \pi^-$, among others. As we can see in the Feynman diagram above, our desired decay mode has the advantage that the neutral pion decays into two photons, allowing KOTO to observe these photons and their transverse momentum in order to pinpoint a $latex K_L \rightarrow \pi^0 \nu \bar{\nu}$ decay. In terms of experimental construction, KOTO includes charged veto detectors in order to reject events with charged particles in the final state, and a systematic study of background events was performed in order to discount hadron showers originating from neutrons in the beam line.

This setup serves KOTO’s goal of exploring CP violation in long-lived kaon decays. CP violation refers to the violation of charge-parity symmetry, the combination of charge-conjugation symmetry (in which a theory is unchanged when we swap a particle for its antiparticle) and parity symmetry (in which a theory is invariant when left and right directions are swapped). We seek to understand why some processes seem to preserve CP symmetry when the Standard Model allows for violation, as is the case in quantum chromodynamics (QCD), and why some processes break CP symmetry, as is seen in the quark mixing matrix (CKM matrix) and may also occur in the neutrino mixing matrix. Overall, CP violation has implications for matter-antimatter asymmetry, the question of why the universe seems to be composed predominantly of matter when particle creation and decay processes produce equal amounts of both matter and antimatter. An imbalance of matter and antimatter in the universe could be created if CP violation existed under the extreme conditions of the early universe, in the first moments after the Big Bang. Explanations for matter-antimatter asymmetry that do not involve CP violation generally require the existence of a primordial matter-antimatter asymmetry, effectively dodging the fundamental question. The observation of CP violation with KOTO could provide critical evidence toward an eventual answer.

The Kitahara paper provides three interpretations of KOTO’s observation that incorporate physics beyond the Standard Model: new heavy physics, new light physics, and new particle production. The first, new heavy physics, amplifies the expected Standard Model signal via the incorporation of new operators that couple to existing Standard Model particles. If this coupling is suppressed, it could adequately explain the observed boost in the branching ratio. The second, new light physics, involves reinterpreting the neutrino-antineutrino pair as a new light particle. Factoring in experimental constraints, this new light particle should decay with a finite lifetime on the order of 0.01-0.1 nanoseconds, making it almost completely invisible to experiment. Finally, a new particle could be produced within the $latex K_L \rightarrow \pi^0 \nu \bar{\nu}$ decay channel; it should be light and long-lived in order to allow for its decay to two photons. The details of these new particle scenarios must account for constraints from other particle physics processes, but each scenario serves to increase the branching ratio through direct production of more final state particles. On the whole, this demonstrates the potential for the $latex K_L \rightarrow \pi^0 \nu \bar{\nu}$ decay to provide a window to physics beyond the Standard Model.

Of course, this analysis presumes the accuracy of KOTO’s four signal events. Pending the confirmation of these detections, there are several exciting possibilities for physics beyond the Standard Model, so be sure to keep your eye on this experiment!

Learn More:

- An overview of the KOTO experiment’s data taking: https://arxiv.org/pdf/1910.07585.pdf

- A description of the sensitivity involved in the KOTO experiment’s search: https://j-parc.jp/en/topics/2019/press190304.html

- More insights into CP violation: https://www.symmetrymagazine.org/article/charge-parity-violation